Configuring Anchore

Configuring Anchore Enterprise starts with configuring each of the core services. Anchore Enterprise deployments using docker compose for trials or Helm for production are designed to run by default with no modifications necessary to get started. Although, many options are available to tune your production deployment to fit your needs.

About Configuring Anchore Enterprise

All system services (except the UI, which has its own configuration) require a single configuration which is read from /config/config.yaml when each service starts up. Settings in this file are mostly related to static settings that are fundamental to the deployment of system services. They are most often updated when the system is being initially tuned for a deployment. They may, infrequently, need to be updated after they have been set as appropriate for any given deployment.

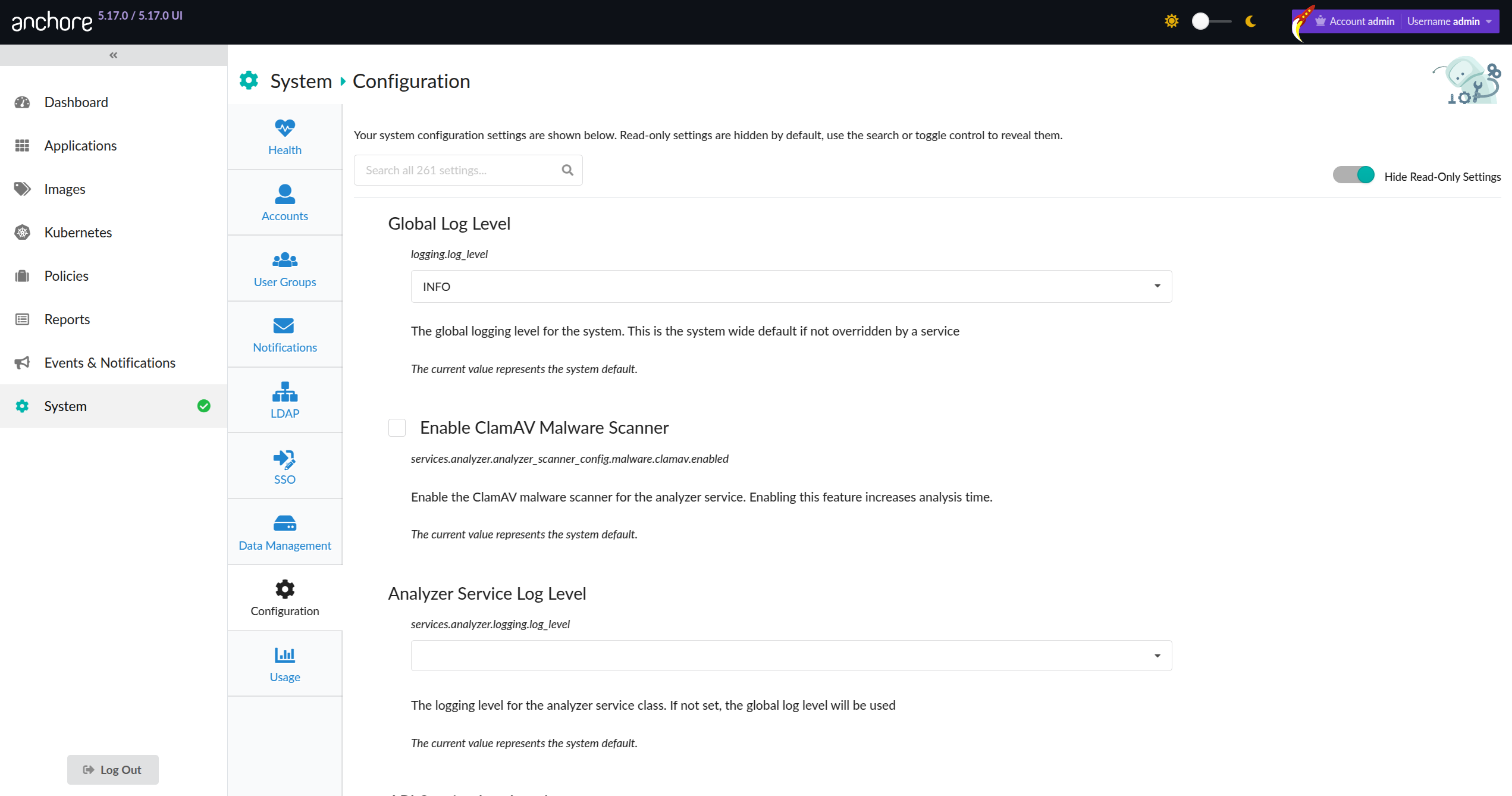

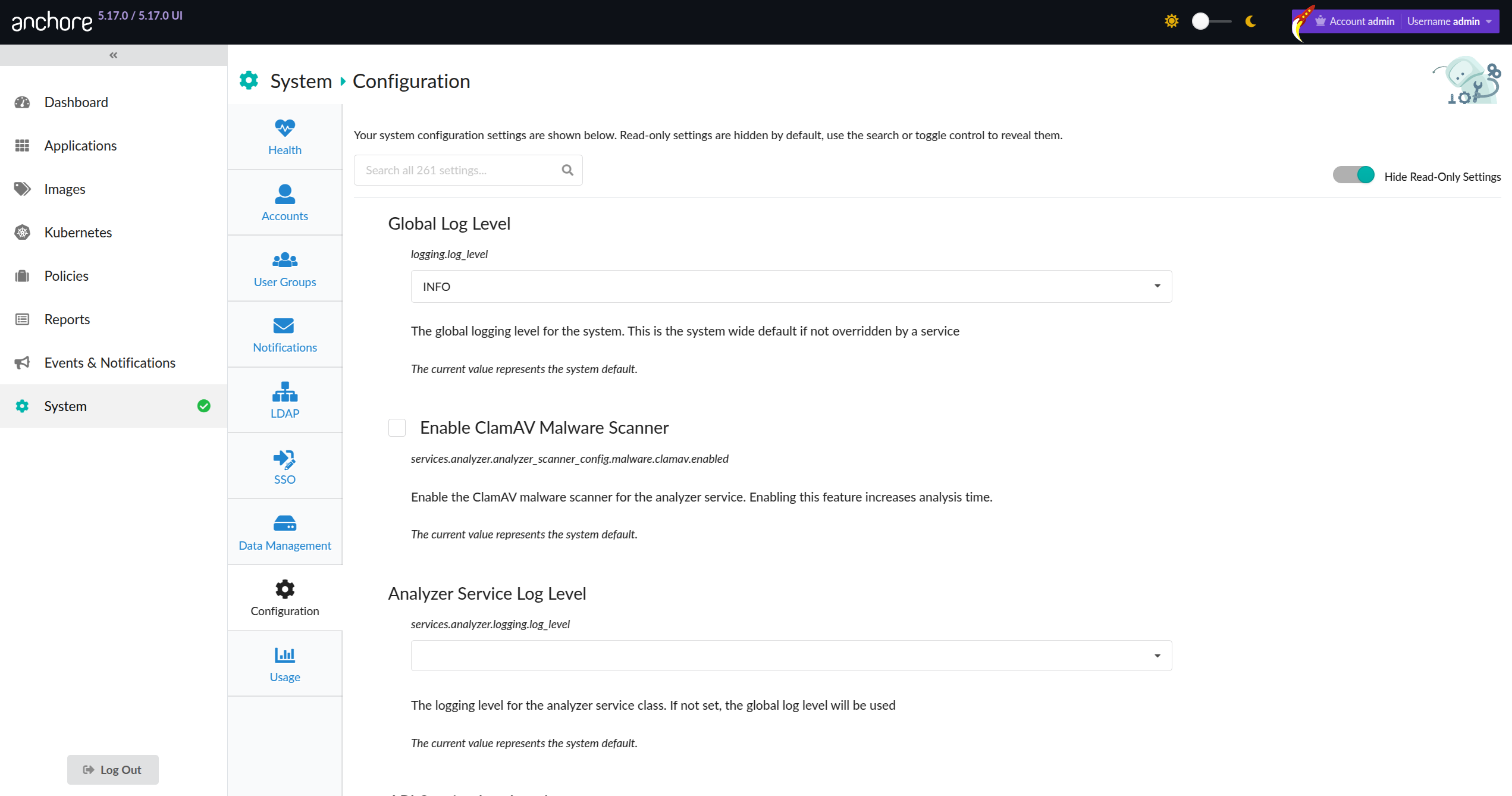

Sections of the UI configuration settings can now be found from within the UI itself! Navigate to the ‘System’ heading in the sidebar and then select ‘Configuration’. Here you will see some of the exposed configuration options:

By default, Anchore Enterprise includes a config.yaml that is functional out-of-the-box, with some parameters set to an environment variable for common site-specific settings. These settings are then set either in docker-compose.yaml, by the Helm chart, or as appropriate for other orchestration/deployment tools.

When deploying Anchore Enterprise using the Helm chart, you can configure it by modifying the anchoreConfig section in your values file. This section corresponds to the default config.yaml file included in the Anchore Enterprise container image. The values file serves to override the default configurations and should be modified to suit your deployment.

1 - General Configuration

Initial Configuration

A single configuration file config.yaml is required to run Anchore - by default, this file is embedded in the Enterprise container image, located in /config/config.yaml. The default configuration file is provided as a way to get started, which is functional out of the box, without modification, when combined with either the Helm method or docker compose method of installing Enterprise. The default configuration is set up to use environment variable substitutions so that configuration values can be controlled by setting the corresponding environment variables at deployment time (see Using Environment Variables in Anchore.

Each environment variable (starting with ANCHORE_) in the default config.yaml is set (either the baseline as set in the Dockerfile, or an override in docker compose or Helm or through a system default) to ensure that the system comes up with a fully populated configuration.

Some examples of useful initial settings follow.

- Default admin credentials: A default admin email and password is required to be defined in the catalog service for the initial bootstrap of enterprise to succeed, which are both set through the default config file using the ANCHORE_ADMIN_PASSWORD and ANCHORE_ADMIN_EMAIL environment variables respectively. The system defines a default email admin@myanchore, but does not define a default password. If using the default config file, the user must set a value for ANCHORE_ADMIN_PASSWORD in order to succeed the initial bootstrap of the system. To set the default password or to override the default email, simply add overrides for ANCHORE_ADMIN_PASSWORD and ANCHORE_ADMIN_EMAIL environment variables, set to your preferred values prior to deploying Anchore Enterprise. After the initial bootstrap, this can be removed if desired.

default_admin_password: '${ANCHORE_ADMIN_PASSWORD}'

default_admin_email: '${ANCHORE_ADMIN_EMAIL}'

- Log level: Anchore Enterprise is configured to run at the INFO log level by default. The full set of options are CRITICAL, ERROR, WARNING, INFO, and DEBUG (in ascending order of log output verbosity). Admin accounts can set the system log level through the dashboard, the dashboard allows differing per-service log levels. Changes through the dashboard do not require a system restart. The log level can also be set globally (across all services) with an override for ANCHORE_LOG_LEVEL prior to deploying Anchore Enterprise, this approach requires a system restart to take effect.

log_level: '${ANCHORE_LOG_LEVEL}'

- Postgres Database: Anchore Enterprise requires access to a PostgreSQL database to operate. The database can be run as a container with a persistent volume or outside of your container environment (which is set up automatically if the example docker-compose.yaml is used). If you wish to use an external Postgres Database, the elements of the connection string in the config.yaml can be specified as environment variable overrides. The default configuration is set up to connect to a postgres DB that is deployed alongside Anchore Enterprise Services when using docker-compose or Helm, to the internal host anchore-db on port 5432 using username postgres with password mysecretpassword and db postgres. If an external database service is being used then you will need to provide the use, password, host, port and DB name environment variables, as shown below.

db_connect: 'postgresql://${ANCHORE_DB_USER}:${ANCHORE_DB_PASSWORD}@${ANCHORE_DB_HOST}:${ANCHORE_DB_PORT}/${ANCHORE_DB_NAME}'

Manual Configuration File Override

While Anchore Enterprise is set up to run out of the box without modifications, and many useful values can be overriden using environment variables as described above, one can always opt to have full control over the configuration by providing a config.yaml file explicitly, typically by generating the file and making it available from an external mount/configmap/etc. at deployment time. A good method to start if you wish to provide your own config.yaml is to extract the default config.yaml from the Anchore Enterprise container image, modify it, and then override the embedded /config/config.yaml at deployment time. For example:

- Extract the default config file from the anchore/enterprise container image:

docker pull docker.io/anchore/enterprise:v5.X.X

docker create --name ae docker.io/anchore/enterprise:v5.X.X

docker cp ae:/config/config.yaml ./my_config.yaml

docker rm ae

Modify the configuration file to your liking.

Set up your deployment to override the embedded /config/config.yaml at run time (below example shows how to achieve this with docker compose). Edit the docker-compose.yaml to include a volume mount that mounts your my_config.yaml over the embedded /config/config.yaml, resulting in a volume section for each Anchore Enterprise service definition.

...

api:

...

volumes:

- /path/to/my_config.yaml:/config/config.yaml:z

...

catalog:

...

volumes:

- /path/to/my_config.yaml:/config/config.yaml:z

...

simpleq:

...

volumes:

- /path/to/my_config.yaml:/config/config.yaml:z

...

policy-engine:

...

volumes:

- /path/to/my_config.yaml:/config/config.yaml:z

...

analyzer:

...

volumes:

- /path/to/my_config.yaml:/config/config.yaml:z

...

Now, each service will come up with your external my_config.yaml mounted over the embedded /config/config.yaml.

1.1 - API Accessible Configuration

Anchore Enterprise provides the ability to view the majority of system configurations via the API.

A select number of configurations can also be modified through the API, or through the System Configuration tab from the UI.

This enables control of system behaviours and characteristics in a programmatic way without needing to restart your deployment.

Note

⚠️ At this time, only a few configurations are modifiable through this new API-backed mechanism yet. Full configuration continues to be achievable through the existing config file mechanism.Configuration Provenance

Configuration values are established by a priority system which derives the final running value based on the location from

which the value was read. This provenance priority mechanism enables backwards compatibility and increased security

for customers wishing tighter control of their systems runtime.

1. Config File Source

Any configuration variable which is declared within an on-disk configuration file will be honored first (ie. via a Helm values.yaml).

These configuration variables will be read-only through the API and UI. Any configuration that is currently set for your deployment

will be preserved.

Note

⚠️ The use of environment variables for configuration values are treated with the same provenance as config file.2. API Config Source

When a configuration variable is not declared in a config file and is one of the select few that are allowed to be modified via the API,

its value will be stored in the system database. These configuration variables can be modified via the API and the changes will be reflected

within the deployment within a short time without having to restart your deployment.

The following configuration variables are modifiable through the API:

services.analyzer.analyzer_scanner_config.malware.clamav.enabledlogging.log_levelservices.apiext.logging.log_levelservices.analyzer.logging.log_levelservices.catalog.logging.log_levelservices.policy_engine.logging.log_levelservices.policy_engine.vulnerabilities.extended_support.rhel.enabledservices.policy_engine.vulnerabilities.extended_support.rhel.versionsservices.simplequeue.logging.log_levelservices.notifications.logging.log_levelservices.data_syncer.logging.log_levelservices.reports.logging.log_levelservices.reports_worker.logging.log_level

3. Anchore Enterprise Defaults

If a configuration variable has not been declared by any other source it inherits the default system value. These are values chosen by Anchore

as safe and functional defaults.

For the majority of deployments we recommend you simply use the default settings for most values as they have been calibrated to

offer the best experience out of the box.

⚠️ Note: These values may change from release to release. Notable changes will be communicated through Release Notes. Significant

system behaviour changes will not be performed between minor releases. If you wish to ensure a default is not changed, “pin” the

value either by providing it explicitly in the config file or setting it directly via the API (when available).

Blocked by Config File

Attempts to set a configurations through the API may return a blocked_by_config_file error.

This error is raised when a configuration variable is not API editable as it is set in a config file.

Locations to inspect for a pinned config value are:

- the Helm attached

values.yaml file (continue reading for further instructions) - the compose attached config.yaml file

- the environment variables being passed into the container

Disabling Helm Chart Configs

For Helm users, to make a value configurable through the API, set the value in the attached values.yaml to "<ALLOW_API_CONFIGURATION>"

As an example:

services:

analyzer:

malware:

clamav:

enabled: "<ALLOW_API_CONFIGURATION>"

API Configurations Refresh

Each running container will refresh its internal configuration state approximately every 30 seconds. After making a change please allow

a minute to elapse for the changes to flush out to all running nodes.

Config File Configurations Refresh

Changes made to a mounted config file will be detected and will cause a system configuration refresh.

If a configuration variable that is changed requires a system restart a log message will be printed to that effect.

If a system is pending restart, none of the changes will take effect until

this is performed. This config file watcher includes a mounted .env file if in use.

Config Validation

In a future major release of Anchore Enterprise, we will be enabling a strict mode type and data validation for the configuration.

This will result in any non-compliant Enterprise deployments failing to boot. For now this is not enforced.

For awareness, validation of your configuration variables and values will occur during system boot.

If any configuration fails this validation, a log message mentioning lenient mode will be published.

This will be accompanied by details on which entries have failed.

To avoid system downtime in a future major release, consider resolving these issues today.

Secret Values

Secret values cannot be read through the API, they will return as the string <redacted> it is however possible to update them.

It is not possible to set any secret value to the string <redacted>.

Security Permissions

Config file permissions have not changed, they are editable by the system deployer.

API requests to make changes to configuration will only be accepted if coming from an Anchore User with system-admin permissions.

1.2 - Content Hints

For an overview of the content hints and overrides features, see the feature overview

Enabling Content Hints

This feature is disabled by default to ensure that images may not exercise this feature without the admin’s explicit approval. This page will explain how to enable content hints for both Docker Compose and Kubernetes (Helm) deployments. Additionally, if you are performing distributed analysis of images and require hints detection you will ALSO need to modify your AnchoreCTL configuration.

Hints cannot be used to modify the findings of Anchore Enterprise’s analyzer beyond adding new packages to the report. If a user specifies a package in the content hints file that is found by Anchore Enterprise’s image analyzers, the hint is ignored and a warning message is logged to notify the user of the conflict

🐳 Docker Compose

To configure your Docker Compose deployment and enable content hints you have two options.

If you supply a config.yaml to the analyzer(s) in your Docker Compose file, then set the enable_hints: true setting in the analyzer service section of config.yaml file.

If you don’t supply a config.yaml, you can add an environment variable ANCHORE_HINTS_ENABLED=true on the analyzer service.

This will also enable content hints detection during centralized analysis.

☸️ Kubernetes (Helm)

To configure your Kubernetes (Helm) deployment and enable content hints, you can update your values file and set anchoreConfig.analyzer.enable_hints: true. This will also enable content hints detection during centralized analysis.

anchoreConfig:

analyzer:

enable_hints: true

AnchoreCTL Distributed

In addition to enabling content hints in your deployment, you may also need to enable content hints detection for distributed analysis.

This can be achieved by editing your AnchoreCTL configuration, for example ~/anchorectl.yaml as shown below. This enables the file cataloger which will add some computational overhead.

---

file-contents:

cataloger:

enabled: true

scope: squashed

skip-files-above-size: 1048576

globs: ['/anchore_hints.json']

1.3 - Environment Variables

Environment variable references may be used in the Anchore config.yaml file to set values that need to be configurable during deployment.

Using this mechanism a common configuration file can be used with multiple Anchore instances with key values being passed using environment variables.

The config.yaml configuration file is read by Anchore and any references to variables prefixed with ANCHORE will be replaced by the value of the matching environment variable.

For example in the sample configuration file the host_id parameter is set be appending the ANCHORE_HOST_ID variable to the string dockerhostid

host_id: 'dockerhostid-${ANCHORE_HOST_ID}'

Notes:

- Only variables prefixed with ANCHORE will be replaced

- If an environment variable is referenced in the configuration file but not set in the environment then a warning will be logged

- It is recommend to use curly braces, for example ${ANCHORE_PARAM} to avoid potentially ambiguous cases

Passing Environment Variables as a File

Environment Variables may also be passed as a file contained key value pairs.

ANCHORE_HOST_ID=myservice1

ANCHORE_LOG_LEVEL=DEBUG

The system will check for an environment variable named ANCHORE_ENV_FILE if this variable is set then Anchore will attempt to read a file at the location specified in this variable.

The Anchore environment file is read before any other Anchore environment variables so any ANCHORE variables passed in the environment will override the values set in the environment file.

2 - Enterprise UI Configuration

The Enterprise UI service has some static configuration options that are read

from /config/config-ui.yaml inside the UI container image when the system

starts up.

The configuration is designed to not require any modification when using the

quickstart (docker compose) or production (Helm) methods of deploying Anchore

Enterprise. If modifications are desired, the options, their meanings, and

environment overrides are listed below for reference:

The (required) license_path key specifies the location of the local system

folder containing the license.yaml license file required by the Anchore

Enterprise UI web service for product activation. This value can be overridden

by using the ANCHORE_LICENSE_PATH environment variable.

The (required) enterprise_uri key specifies the address of the Anchore

Enterprise service. The value must be a string containing a properly-formed

‘http’ or ‘https’ URI. This value can be overridden by using the

ANCHORE_ENTERPRISE_URI environment variable.

enterprise_uri: 'http://api:8228/v2'

The (required) redis_uri key specifies the address of the Redis service. The

value must be a string containing a properly-formed redis URI such as

redis://ui-redis. If encryption in transit is in use with an external Redis the

connection string should be rediss:// instead of redis://. Note that the

default configuration uses the Redis Serialization Protocol (RESP). This

value can be overridden by using the ANCHORE_REDIS_URI environment variable.

redis_uri: 'redis://ui-redis:6379'

The (required) appdb_uri key specifies the location and credentials for the

postgres DB endpoint used by the UI. The value must contain the host, port, DB

user, DB password, and DB name. This value can be overridden by using the

ANCHORE_APPDB_URI environment variable.

appdb_uri: 'postgres://<db-user>:<db-pass>@<db-host>:<db-port>/<db-name>'

The (required) reports_uri key specifies the address of the Reports service.

The value must be a string containing a properly-formed ‘http’ or ‘https’ URI

and can be overridden by using the ANCHORE_REPORTS_URI environment variable.

Note that the presence of an uncommented reports_uri key in this file (even

if unset, or set with an invalid value) instructs the Anchore Enterprise UI

web service that the Reports feature must be enabled.

reports_uri: 'http://reports:8228/v2'

The (optional) enable_ssl key specifies if SSL operations should be enabled

within in the web app runtime. When this value is set to True, secure

cookies will be used with a SameSite value of None. The value must be a

Boolean, and defaults to False if unset.

Note: Only enable this property if your UI deployment configured to run

within an SSL-enabled environment (for example, behind a reverse proxy, in the

presence of signed certs etc.)

This value can be overridden by using the ANCHORE_ENABLE_SSL environment

variable.

The (optional) enable_proxy key specifies whether to trust a reverse proxy

when setting secure cookies (via the X-Forwarded-Proto header). The value

must be a Boolean, and defaults to False if unset. In addition, SSL must be

enabled for this to work. This value can be overridden by using the

ANCHORE_ENABLE_PROXY environment variable.

The (optional) allow_shared_login key specifies if a single set of user

credentials can be used to start multiple Anchore Enterprise UI sessions; for

example, by multiple users across different systems, or by a single user on a

single system across multiple browsers.

When set to False, only one session per credential is permitted at a time,

and logging in will invalidate any other sessions that are using the same set

of credentials. If this property is unset, or is set to anything other than a

Boolean, the web service will default to True.

Note that setting this property to False does not prevent a single session

from being viewed within multiple tabs inside the same browser. This value

can be overridden by using the ANCHORE_ALLOW_SHARED_LOGIN environment

variable.

The (optional) redis_flushdb key specifies if the Redis datastore containing

user session keys and data is emptied on application startup. If the datastore

is flushed, any users with active sessions will be required to

re-authenticate.

If this property is unset, or is set to anything other than a Boolean, the web

service will default to True. This value can be overridden by using the

ANCHORE_REDIS_FLUSHDB environment variable.

The (optional) custom_links key allows a list of up to 10 external links to

be provided (additional items will be excluded). The top-level title key

provided the label for the menu (if present, otherwise the string “Custom

External Links” will be used instead).

Each link entry must have a title of greater than 0-length and a valid URI. If

either item is invalid, the entry will be excluded.

custom_links:

title: Custom External Links

links:

- title: Example Link 1

uri: https://example.com

- title: Example Link 2

uri: https://example.com

- title: Example Link 3

uri: https://example.com

- title: Example Link 4

uri: https://example.com

- title: Example Link 5

uri: https://example.com

- title: Example Link 6

uri: https://example.com

- title: Example Link 7

uri: https://example.com

- title: Example Link 8

uri: https://example.com

- title: Example Link 9

uri: https://example.com

- title: Example Link 10

uri: https://example.com

The (optional) force_websocket key specifies if the WebSocket protocol must

be used for socket message communications. By default, long-polling is

initially used to establish the handshake between client and web service,

followed by a switch to WS if the WebSocket protocol is supported.

If this value is unset, or is set to anything other than a Boolean, the web

service will default to False.

This value can be overridden by using the ANCHORE_FORCE_WEBSOCKET

environment variable.

The (optional) authentication_lock keys specify if a user should be

temporarily prevented from logging in to an account after one or more failed

authentication attempts. For this feature to be enabled, both values must be

whole numbers greater than 0. They can be overridden by using the

ANCHORE_AUTHENTICATION_LOCK_COUNT and ANCHORE_AUTHENTICATION_LOCK_EXPIRES

environment variables.

The count value represents the number of failed authentication attempts

allowed to take place before a temporary lock is applied to the username. The

expires value represents, in seconds, how long the lock will be applied for.

Note that, for security reasons, when this feature is enabled it will be

applied to any submitted username, regardless of whether the user exists.

authentication_lock:

count: 5

expires: 300

The (optional) enable_add_repositories key specifies if repositories can be

added via the application interface by either administrative users or standard

users. In the absence of this key, the default is True. When enabled, this

property also suppresses the availability of the Watch Repository toggle

associated with any repository entries displayed in the Artifact Analysis

view.

Note that in the absence of one or all of the properties, the default is also

True. Thus, this key, and a child key corresponding to an account type (that

is itself explicitly set to False) must be set for the feature to be

disabled for that account.

enable_add_repositories:

admin: True

standard: True

The (optional) ldap_timeout and ldap_connect_timeout keys respectively

specify the time (in milliseconds) the LDAP client should let operations stay

alive before timing out, and the time (in milliseconds) the LDAP client should

wait before timing out on TCP connections. Each value must be a whole number

greater than 0.

When these values are unset (or set incorrectly) the app will fall back to

using a default value of 6000 milliseconds. The same default is used when

the keys are not enabled.

These value can be overridden by using the ANCHORE_LDAP_AUTH_TIMEOUT and

ANCHORE_LDAP_AUTH_CONNECT_TIMEOUT environment variables.

ldap_timeout: 6000

ldap_connect_timeout: 6000

The (optional) custom_message key allows you to provide a message that will

be displayed on the application login page below the Username and

Password fields. The key value must be an object that contains:

- A

title key, whose string value provides a title for the message—which can

be up to 100 characters - A

message key, whose string value is the message itself—which can be up to

500 characters

custom_message:

title:

"Title goes here..."

message:

"Message goes here..."

Note: Both title and message values must be present and contain at

least 1 character for the message box to be displayed. If either value

exceeds the character limit, the string will be truncated with an ellipsis.

The (optional) log_level key allows you to set the descriptive detail of the

application log output. The key value must be a string selected from the

following priority-ordered list:

Once set, each level will automatically include the output for any levels

above it—for example, info will include the log output for details at the

warn and error details, whereas error will only show error output.

This value can be overridden by using the ANCHORE_LOG_LEVEL environment

variable. When no level is set, either within this configuration file or by

the environment variable, a default level of http is used.

The (optional) enrich_inventory_view key allows you to set whether the

Kubernetes feature should aggregate and include compliance and

vulnerability data from the reports service. Setting this key to be False

can increase performance on high-volume systems.

This value can be overridden by using the ANCHORE_ENRICH_INVENTORY_VIEW

environment variable. When no flag is set, either within this configuration

file or by the environment variable, a default setting of True is used.

enrich_inventory_view: True

The (optional) enable_prometheus_metrics key enables exporting monitoring

metrics to Prometheus. The metrics are made available on the /metrics

endpoint.

This value can be overridden by using the ANCHORE_ENABLE_METRICS environment

variable. When no flag is set, either within this configuration file or by the

environment variable, a default setting of False is used.

enable_prometheus_metrics: False

The (optional) banners key allows you to provide messages that

will be displayed as a banner at the top and/or bottom of the application

or only the login page. You can set either or both banners. Each banner

is defined by a key that contains an object with the following properties:

text (string): The message to be displayed in the banner. This can be

up to 2000 characters long.text_color (string): The color of the text in the banner.background_color (string): The background color of the banner.display (string): The display condition for the banner. This can be set

to always or login-only. If not specified, the default is

always. The login-only option will only display the banner on the

login page.

banners:

top:

text: "Custom message for the top banner..."

text_color: ""

background_color: ""

display: "always"

bottom:

text: "Custom message for the bottom banner..."

text_color: ""

background_color: ""

display: "login-only"

Note:

- The

text and value must be present and contain at

least 1 character for the message box to be displayed. If the value

exceeds the character limit, the string will be truncated with an ellipsis

with the full text available to view on hover. - The

text_color and background_color values can be any

valid CSS color format, including hex codes (e.g., #FF5733),

RGB (e.g., rgb(255, 87, 51)), or color names (e.g., red).

Sections of the UI configuration settings can now be found from within the UI itself! Navigate to the ‘System’ heading in the sidebar and then select ‘Configuration’. Here you will see some of the exposed configuration options:

NOTE: The latest default UI configuration file can always be extracted from

the Enterprise UI container to review the latest options, environment overrides

and descriptions of each option using the following process:

docker login

docker pull docker.io/anchore/enterprise-ui:latest

docker create --name aui docker.io/anchore/enterprise-ui:latest

docker cp aui:/config/config-ui.yaml /tmp/my-config-ui.yaml

docker rm aui

cat /tmp/my-config-ui.yaml

...

...

2.1 - Using Dashboard

Overview

The Dashboard is your configurable landing page where insights into the collective status of your container image environment can be displayed through various widgets. Utilizing the Enterprise Reporting Service, the widgets are hydrated with metrics which are generated and updated on a cycle, the duration of which is determined by application configuration.

Note: Because the reporting data cycle is configurable, the results shown in this view may not precisely reflect actual analysis output at any given time.

For more information on how to modify this cycle or the Reporting Service in general, please refer to the Reporting Service.

The following sections in this document describe how to add widgets to the dashboard and how to customize the dashboard layout to your preference.

To add a new widget, click the Add New Widget button present in the Dashboard view. Or, if no widgets are defined, click the Let’s add one! button shown.

Upon doing so, a modal will appear with several properties described below:

| Property | Description |

|---|

| Name | The name shown within the Widget’s header. |

| Mode | ‘Compact’ a widget to keep data easily digestable at a glance. ‘Expand’ to view how your data has evolved over a configurable time period. |

| Collection | The collection of tags you’re interested in. Toggle to view metrics for all tags - including historical ones. |

| Time Series Settings | The time period you wish to view metrics for within the expanded mode. |

| Type | The category of information such as ‘Vulnerabilities’ or ‘Policy Evaluations’ which denotes what metrics are capable of being shown. |

| Metrics | The list of metrics available based on Type. |

Once you enter the required properties and click OK, the widget will be created and any metrics needed to hydrate your Dashboard will be fetched and updated.

Note: All fields except Type are editable by clicking the button shown on the top right of the header when hovering over a widget.

Viewing Results

The Reporting Service at its core aggregates data on resources across accounts and generates metrics. These metrics, in turn, fuel the Dashboard’s mission to provide actionable items straight to the user - that’s you!

Leverage these results to dive into the exact reason for a failed policy evaluation or the cause and fix of a critical vulnerability.

Vulnerabilities

Vulnerabilities are grouped and colored by severity. Critical, High, and Medium vulnerabilities are included by default but you can toggle which ones are relevant to your interests by clicking the button.

Clicking one of these metrics navigates you to a view (shown below) where you can browse the filtered set of vulnerabilities matching that severity.

For more info on a particular vulnerability, click on its corresponding button visible in the Links column. To view the exact tags affected, drill down to a specific repository by expanding the arrows.

View that tag’s in-depth analysis by clicking on the value within its Image Digest column.

Policy Evaluations

Policy Evaluations are grouped by their evaluation outcome such as Pass or Fail and can be further filtered by the reason for that result. All reasons are included by default but as with other widget properties, they can be edited by clicking the button.

Clicking one of these results navigates you to a view (shown below) where you can browse the affected and filtered set of tags.

Dig down to a specific tag by expanding the arrow shown on the left side of the row.

Navigate using the Image Digest value to view even more info such as the specific policy being triggered and what exactly is triggering it. If you’re interested in viewing the contents of your policy, click on the Policy ID value.

Dashboard Configuration

After populating your Dashboard with various widgets, you can easily modify the layout by using some methods explained below:

Click this icon shown in the top right or the header of a widget to Drag and Drop it into a new location.

Shown in the bottom right of a widget, click and hold this icon to Resize it.

Click this icon shown in the top right of a widget to Expand it and include a graphical representation of how your data has evolved over a time period of your choice.

Click this icon shown in the top right of a widget to Compact it into an easily digestable view of the metrics you’re interested in.

lick this icon shown in the top right of a widget to Delete it from the dashboard.

3 - Configuring AnchoreCTL

The anchorectl command can be configured with command-line arguments, environment variables, and/or a configuration file. Typically, a configuration file should be created to set any static configuration parameters (your Anchore Enterprise’s URL, logging behavior, cataloger configurations, etc), so that invocations of the tool only require you to provide command-specific parameters as environment/cli options. However, to fully support stateless scripting, a configuration file is not strictly required (settings can be put in environment/cli options).

Important AnchoreCTL is version-aligned with Anchore Enterprise for major/minor. Please refer to the Enterprise Release Notes for the supported version of AnchoreCTL.

The anchorectl tool will search for an available configuration file using the following search order, until it finds a match:

- .anchorectl.yaml

- anchorectl.yaml

- .anchorectl/config.yaml

- ~/.anchorectl.yaml

- ~/anchorectl.yaml

- $XDG_CONFIG_HOME/anchorectl/config.yaml

Note The anchorectl can also utilize inline Environment Variables which override any configuration file settings.

Note The ANCHORECTL_CONFIG environment variable can be used to specify a custom location for the AnchoreCTL YAML file.

For the most basic functional invocation of anchorectl, the only required parameters are listed below:

url: "" # the URL to the Anchore Enterprise API (env var: "ANCHORECTL_URL")

username: "" # the Anchore Enterprise username (env var: "ANCHORECTL_USERNAME")

password: "" # the Anchore Enterprise user's login password (env var: "ANCHORECTL_PASSWORD")

For example, with our Docker Compose quickstart deployment of Anchore Enterprise running on your local system, your ~/.anchorectl.yaml would look like the following

url: "http://localhost:8228"

username: "admin"

password: "yourstrongpassword"

A good way to quickly test that your anchorectl client is ready to use against a deployed and running Anchore Enterprise endpoint is to exercise the system status call, which will display status information fetched from your Enterprise deployment. With ~/.anchorectl.yaml installed and populated correctly, no environment or parameters are required:

anchorectl system status

✔ Status system

┌─────────────────┬────────────────────┬─────────────────────────────┬──────┬────────────────┬────────────┬──────────────┐

│ SERVICE │ HOST ID │ URL │ UP │ STATUS MESSAGE │ DB VERSION │ CODE VERSION │

├─────────────────┼────────────────────┼─────────────────────────────┼──────┼────────────────┼────────────┼──────────────┤

│ analyzer │ anchore-quickstart │ http://analyzer:8228 │ true │ available │ 5230 │ 5.23.0 │

│ policy_engine │ anchore-quickstart │ http://policy-engine:8228 │ true │ available │ 5230 │ 5.23.0 │

│ apiext │ anchore-quickstart │ http://api:8228 │ true │ available │ 5230 │ 5.23.0 │

│ reports │ anchore-quickstart │ http://reports:8228 │ true │ available │ 5230 │ 5.23.0 │

│ reports_worker │ anchore-quickstart │ http://reports-worker:8228 │ true │ available │ 5230 │ 5.23.0 │

│ data_syncer │ anchore-quickstart │ http://data-syncer:8228 │ true │ available | 5230 │ 5.23.0 │

│ simplequeue │ anchore-quickstart │ http://queue:8228 │ true │ available │ 5230 │ 5.23.0 │

│ notifications │ anchore-quickstart │ http://notifications:8228 │ true │ available │ 5230 │ 5.23.0 │

│ catalog │ anchore-quickstart │ http://catalog:8228 │ true │ available │ 5230 │ 5.23.0 │

└─────────────────┴────────────────────┴─────────────────────────────┴──────┴────────────────┴────────────┴──────────────┘

Congratulations you should now have a working AnchoreCTL.

Using Environment Variables

For some use cases being able to supply inline environment variables can be useful, see the following system status call as an example.

ANCHORECTL_URL="http://localhost:8228" ANCHORECTL_USERNAME="admin" ANCHORECTL_PASSWORD="foobar" anchorectl system status

✔ Status system

┌─────────────────┬────────────────────┬─────────────────────────────┬──────┬────────────────┬────────────┬──────────────┐

│ SERVICE │ HOST ID │ URL │ UP │ STATUS MESSAGE │ DB VERSION │ CODE VERSION │

├─────────────────┼────────────────────┼─────────────────────────────┼──────┼────────────────┼────────────┼──────────────┤

│ reports │ anchore-quickstart │ http://reports:8228 │ true │ available │ 5230 │ 5.23.0 │

│ analyzer │ anchore-quickstart │ http://analyzer:8228 │ true │ available │ 5230 │ 5.23.0 │

│ notifications │ anchore-quickstart │ http://notifications:8228 │ true │ available │ 5230 │ 5.23.0 │

│ apiext │ anchore-quickstart │ http://api:8228 │ true │ available │ 5230 │ 5.23.0 │

│ policy_engine │ anchore-quickstart │ http://policy-engine:8228 │ true │ available │ 5230 │ 5.23.0 │

│ reports_worker │ anchore-quickstart │ http://reports-worker:8228 │ true │ available │ 5230 │ 5.23.0 │

│ simplequeue │ anchore-quickstart │ http://queue:8228 │ true │ available │ 5230 │ 5.23.0 │

│ catalog │ anchore-quickstart │ http://catalog:8228 │ true │ available │ 5230 │ 5.23.0 │

└─────────────────┴────────────────────┴─────────────────────────────┴──────┴────────────────┴────────────┴──────────────┘

All the environment variable options can be seen by using anchorectl --help

Using API Keys

If you do not want to expose your private credentials in the configuration file, you can generate an API Key that allows most of the functionality of anchorectl.

Please see Generating API Keys

Once you generate the API Key, the UI will give you a key value. You can use this key with the anchorectl configuration:

url: "http://localhost:8228"

username: "_api_key"

password: <API Key Value>

NOTE: API Keys authenticate using HTTP basic auth. The username for API keys has to be _api_key.

Without setting up ~/.anchorectl.yaml or any configuration file, you can interact using environment variables:

Using Distributed Analysis Mode

If you intend to use anchorectl in Distributed Analysis mode, then you’ll need to enable two additional catalogers (secret-search, and file-contents) to mirror the behavior of Anchore Enterprise defaults, when performing an image analysis in Centralized Analysis mode. Below are the ~/.anchorectl.yaml settings to mirror the Anchore Enterprise defaults.

secret-search:

additional-patterns:

cataloger:

enabled: true

scope: Squashed

additional-patterns: {}

exclude-pattern-names: []

reveal-values: false

skip-files-above-size: 10000

content-search:

cataloger:

enabled: false

scope: Squashed

patterns: {}

reveal-values: false

skip-files-above-size: 10000

file-contents:

cataloger:

enabled: true

scope: Squashed

skip-files-above-size: 1048576

globs: ['/etc/passwd']

Note

anchorectl, when used in distributed analysis mode, already contains built-in regex patterns for AWS access keys, private keys and secret keys. You need to make sure you have the cataloger.enabled value set to true so that the cataloger is enabled.You can set further Regex searches up if you would like to do any additional searches by using the secret-search.additional-patterns value. Under the additional patterns header, you can use Go’s standard regular expression formatting to specify patterns. You can use this link as a reference to assist with writing these searches: https://github.com/google/re2/wiki/Syntax.

For more information on using anchorectl in Distributed Analysis mode, see Concepts: Image Analysis and AnchoreCTL Usage: Images.

Using Container Registry Authentication

When using distributed analysis with –from registry if the registry/repository requires authentication it can be supplied as follows:

ANCHORECTL_REGISTRY_AUTH_AUTHORITY=docker.io ANCHORECTL_REGISTRY_AUTH_USERNAME=dhusername ANCHORECTL_REGISTRY_AUTH_PASSWORD=XXXXXXXXXXX anchorectl image add --from registry docker.io/anchore/enterprise:v5

Using Help & Debug Modes

The anchorectl tool has extensive built-in help information for each command and operation, with many of the parameters allowing for environment overrides. To start with anchorectl, you can run the command with --help to see all the operation sections available:

A convenient way to see your changes taking effect is to instruct anchorectl to output DEBUG level logs to the screen using the -vv flag, which will display the full configuration that the tool is using (including the options you set, plus all the defaults and additional configuration file options available).

NOTE: if you would like to capture the full default configuration as displayed when running with -vv, you can paste that output as the contents of your .anchorectl.yaml, and then work with the settings for full control.

If you need any more help, please learn about Verifying Service Health

Using a Forward-Proxy

AnchoreCTL can be configured to use a forward-proxy as follows.

Windows

set http_proxy=server:port

set https_proxy=server:port

Please note that if your proxy uses an untrusted certificate you may also need the following:

set ANCHORECTL_HTTP_TLS_INSECURE=true

Mac/Linux

export http_proxy=http://yourproxy.com:port

export https_proxy=http://yourproxy.com:port

# or

export https_proxy=https://yourproxy.com:port

Please note that if your proxy uses an untrusted certificate you may also need the following:

export ANCHORECTL_HTTP_TLS_INSECURE=true

4 - Using the Analysis Archive

As mentioned in concepts, there are two locations for image analysis to be stored:

- The working set: the standard state after analysis completes. In this location, the image is fully loaded and available for policy evaluation, content, and vulnerability queries.

- The archive set: a location to keep image analysis data that cannot be used for policy evaluation or queries but can use cheaper storage and less db space and can be reloaded into the working set as needed.

Working with the Analysis Archive

List archived images:

anchorectl archive image list

✔ Fetched archive-images

┌─────────────────────────────────────────────────────────────────────────┬────────────────────────┬──────────┬──────────────┬──────────────────────┐

│ IMAGE DIGEST │ TAGS │ STATUS │ ARCHIVE SIZE │ ANALYZED AT │

├─────────────────────────────────────────────────────────────────────────┼────────────────────────┼──────────┼──────────────┼──────────────────────┤

│ sha256:89020cd33be2767f3f894484b8dd77bc2e5a1ccc864350b92c53262213257dfc │ docker.io/nginx:latest │ archived │ 1.4 MB │ 2022-08-23T21:08:29Z │

└─────────────────────────────────────────────────────────────────────────┴────────────────────────┴──────────┴──────────────┴──────────────────────┘

To add an image to the archive, use the digest. All analysis, policy evaluations, and tags will be added to the archive.

NOTE: this does not remove it from the working set. To fully move it you must first archive and then delete image in the working set using AnchoreCTL or the API directly.

Archiving Images

Archiving an image analysis creates a snapshot of the image’s analysis data, policy evaluation history, and tags and stores in a different storage location and

different record location than working set images.

anchorectl image list

✔ Fetched images

┌───────────────────────────────────────────────────────┬─────────────────────────────────────────────────────────────────────────┬──────────┬────────┐

│ TAG │ DIGEST │ ANALYSIS │ STATUS │

├───────────────────────────────────────────────────────┼─────────────────────────────────────────────────────────────────────────┼──────────┼────────┤

│ docker.io/ubuntu:latest │ sha256:33bca6883412038cc4cbd3ca11406076cf809c1dd1462a144ed2e38a7e79378a │ analyzed │ active │

│ docker.io/ubuntu:latest │ sha256:42ba2dfce475de1113d55602d40af18415897167d47c2045ec7b6d9746ff148f │ analyzed │ active │

│ docker.io/localimage:latest │ sha256:74c6eb3bbeb683eec0b8859bd844620d0b429a58d700ea14122c1892ae1f2885 │ analyzed │ active │

│ docker.io/nginx:latest │ sha256:89020cd33be2767f3f894484b8dd77bc2e5a1ccc864350b92c53262213257dfc │ analyzed │ active │

└───────────────────────────────────────────────────────┴─────────────────────────────────────────────────────────────────────────┴──────────┴────────┘

# anchorectl archive image add sha256:89020cd33be2767f3f894484b8dd77bc2e5a1ccc864350b92c53262213257dfc

✔ Added image to archive

┌─────────────────────────────────────────────────────────────────────────┬──────────┬────────────────────────┐

│ DIGEST │ STATUS │ DETAIL │

├─────────────────────────────────────────────────────────────────────────┼──────────┼────────────────────────┤

│ sha256:89020cd33be2767f3f894484b8dd77bc2e5a1ccc864350b92c53262213257dfc │ archived │ Completed successfully │

└─────────────────────────────────────────────────────────────────────────┴──────────┴────────────────────────┘

Then to delete it in the working set (optionally):

NOTE: You may need to use –force if the image is the newest of its tags and has active subscriptions_

anchorectl image delete sha256:89020cd33be2767f3f894484b8dd77bc2e5a1ccc864350b92c53262213257dfc --force

┌─────────────────────────────────────────────────────────────────────────┬──────────┐

│ DIGEST │ STATUS │

├─────────────────────────────────────────────────────────────────────────┼──────────┤

│ sha256:89020cd33be2767f3f894484b8dd77bc2e5a1ccc864350b92c53262213257dfc │ deleting │

└─────────────────────────────────────────────────────────────────────────┴──────────┘

At this point the image in the archive only.

Restoring images from the archive into the working set

This will not delete the archive entry, only add it back to the working set. Restore and image to working set from archive:

anchorectl archive image restore sha256:89020cd33be2767f3f894484b8dd77bc2e5a1ccc864350b92c53262213257dfc

✔ Restore image

┌────────────────────────┬─────────────────────────────────────────────────────────────────────────┬──────────┬────────┐

│ TAG │ DIGEST │ ANALYSIS │ STATUS │

├────────────────────────┼─────────────────────────────────────────────────────────────────────────┼──────────┼────────┤

│ docker.io/nginx:latest │ sha256:89020cd33be2767f3f894484b8dd77bc2e5a1ccc864350b92c53262213257dfc │ analyzed │ active │

└────────────────────────┴─────────────────────────────────────────────────────────────────────────┴──────────┴────────┘

To view the restored image:

anchorectl image get sha256:89020cd33be2767f3f894484b8dd77bc2e5a1ccc864350b92c53262213257dfc

Tag: docker.io/nginx:latest

Digest: sha256:89020cd33be2767f3f894484b8dd77bc2e5a1ccc864350b92c53262213257dfc

ID: 2b7d6430f78d432f89109b29d88d4c36c868cdbf15dc31d2132ceaa02b993763

Analysis: analyzed

Status: active

Working with Archive rules

As with all AnchoreCTL commands, the --help option will show the arguments, options and descriptions of valid values.

List existing rules:

anchorectl archive rule list

✔ Fetched rules

┌──────────────────────────────────┬────────────┬──────────────┬────────────────────┬────────────┬─────────┬───────┬──────────────────┬──────────────┬─────────────┬──────────────────┬────────┬──────────────────────┐

│ ID │ TRANSITION │ ANALYSIS AGE │ TAG VERSIONS NEWER │ REGISTRY │ REPO │ TAG │ REGISTRY EXCLUDE │ REPO EXCLUDE │ TAG EXCLUDE │ EXCLUDE EXP DAYS │ GLOBAL │ LAST UPDATED │

├──────────────────────────────────┼────────────┼──────────────┼────────────────────┼────────────┼─────────┼───────┼──────────────────┼──────────────┼─────────────┼──────────────────┼────────┼──────────────────────┤

│ 2ca9284202814f6aa41916fd8d21ddf2 │ archive │ 90d │ 90 │ * │ * │ * │ │ │ │ -1 │ false │ 2022-08-19T17:58:38Z │

│ 6cb4011b102a4ba1a86a5f3695871004 │ archive │ 90d │ 90 │ foobar.com │ myimage │ mytag │ barfoo.com │ * │ * │ -1 │ false │ 2022-08-22T18:47:32Z │

└──────────────────────────────────┴────────────┴──────────────┴────────────────────┴────────────┴─────────┴───────┴──────────────────┴──────────────┴─────────────┴──────────────────┴────────┴──────────────────────┘

Add a rule:

anchorectl archive rule add --transition archive --analysis-age-days 90 --tag-versions-newer 1 --selector-registry 'docker.io' --selector-repository 'library/*' --selector-tag 'latest'

✔ Added rule

ID: 0031546b9ce94cf0ae0e60c0f35b9ea3

Transition: archive

Analysis Age: 90d

Tag Versions Newer: 1

Selector:

Registry: docker.io

Repo: library/*

Tag: latest

Exclude:

Selector:

Registry Exclude:

Repo Exclude:

Tag Exclude:

Exclude Exp Days: -1

Global: false

Last Updated: 2022-08-24T22:57:51Z

The required parameters are: minimum age of analysis in days, number of tag versions newer, and the transition to use.

There is also an optional --system-global flag available for admin account users that makes the rule apply to all accounts

in the system.

As a non-admin user you can see global rules but you cannot update/delete them (will get a 404):

#ANCHORECTL_USERNAME=test1user ANCHORECTL_PASSWORD=password ANCHORECTL_ACCOUNT=test1acct anchorectl archive rule list

✔ Fetched rules

┌──────────────────────────────────┬────────────┬──────────────┬────────────────────┬───────────┬───────────┬────────┬──────────────────┬──────────────┬─────────────┬──────────────────┬────────┬──────────────────────┐

│ ID │ TRANSITION │ ANALYSIS AGE │ TAG VERSIONS NEWER │ REGISTRY │ REPO │ TAG │ REGISTRY EXCLUDE │ REPO EXCLUDE │ TAG EXCLUDE │ EXCLUDE EXP DAYS │ GLOBAL │ LAST UPDATED │

├──────────────────────────────────┼────────────┼──────────────┼────────────────────┼───────────┼───────────┼────────┼──────────────────┼──────────────┼─────────────┼──────────────────┼────────┼──────────────────────┤

│ 16dc38cef54e4ce5ac87d00e90b4a4f2 │ archive │ 90d │ 1 │ docker.io │ library/* │ latest │ │ │ │ -1 │ true │ 2022-08-24T23:01:05Z │

└──────────────────────────────────┴────────────┴──────────────┴────────────────────┴───────────┴───────────┴────────┴──────────────────┴──────────────┴─────────────┴──────────────────┴────────┴──────────────────────┘

# ANCHORECTL_USERNAME=test1user ANCHORECTL_PASSWORD=password ANCHORECTL_ACCOUNT=test1acct anchorectl archive rule delete 16dc38cef54e4ce5ac87d00e90b4a4f2

⠙ Deleting rule

error: 1 error occurred:

* unable to delete rule:

{

"detail": {

"error_codes": []

},

"httpcode": 404,

"message": "Rule not found"

}

# ANCHORECTL_USERNAME=test1user ANCHORECTL_PASSWORD=password ANCHORECTL_ACCOUNT=test1acct anchorectl archive rule get 16dc38cef54e4ce5ac87d00e90b4a4f2

✔ Fetched rule

ID: 16dc38cef54e4ce5ac87d00e90b4a4f2

Transition: archive

Analysis Age: 90d

Tag Versions Newer: 1

Selector:

Registry: docker.io

Repo: library/*

Tag: latest

Exclude:

Selector:

Registry Exclude:

Repo Exclude:

Tag Exclude:

Exclude Exp Days: -1

Global: true

Last Updated: 2022-08-24T23:01:05Z

Delete a rule:

anchorectl archive rule delete 16dc38cef54e4ce5ac87d00e90b4a4f2

✔ Deleted rule

No results

5 - Artifact Lifecycle Policies

Artifact Lifecycle Policies are instruction sets which perform lifecycle events on certain types of artifacts.

Each policy can perform an action on a given artifact_type based on configured policy_conditions (rules/selectors).

As an example, a system administrator may create an Artifact Lifecycle Policy that will automatically delete any image that has an analysis date older than 180

days.

WARNING ⚠️

- ⚠️ These policies have the ability to delete data without archive/backup. Proceed with caution!

- ⚠️ These policies are GLOBAL they will impact every account on the system.

- ⚠️ These policies can only be created and managed by a system administrator.

Policy Components

Artifact Lifecycle Policies are global policies that will execute on a schedule defined by a cycle_timer within the

catalog service. services.catalog.cycle_timers.artifact_lifecycle_policy_tasks has a default time of every 12 hours.

The policy is constructed with the following parameters:

Artifacts Types - The type of artifacts the policy will consider. The current supported type is image.

Inclusion Rules - The set of criteria which will be used to determine the set of artifacts to work on.

All criteria must be satisfied for the policy to enact on an artifact.

- days_since_analyzed

- Selects artifacts whose

analyzed_at date is n days old. - If this value is set to less than zero, this rule is disabled.

- An artifact that has not been analyzed, either because it failed analysis or the analysis is pending, will not be included.

- even_if_exists_in_runtime_inventory

- When

true, an artifact will be included even if it exists in the Runtime Inventory. - When

false, an artifact will not be included if it exists in the Runtime Inventory. Essentially protecting artifacts found in your runtime inventory. Please review the Inventory Time-To-Live for information on how to prune the Runtime Inventory.

- include_base_images

- When

true, images that have ancestral children will be included. - When

false, images that have ancestral children will not be included. - Note: These are evaluated per run. As children are deleted, a previously excluded parent image may too become eligible for deletion.

- include_failed_analysis

- When

true, images that are in a failed analysis state will be included.- Note: When the image does not contain an

analyzed_at date, the created_at date will be used.

- When

false, images that are in a failed analysis state will not be included. This is the default behavior.

Policy Actions - After the policy determines a set of artifacts that satisfy the Inclusion Rules, this

is the action which will be performed on them. The current supported action is delete.

Actioned artifacts will have a matching system Event created for audit and notification purposes.

Policy Interaction

If more than one policy is enabled, each policy will work independently, using its set of rules to determine if any

artifacts satisfy its criteria. Each policy will apply its action on the set of artifacts.

Creating a new Artifact Lifecycle Policy

Due to the potentially destructive nature of these policies every parameter must be explicitly declared when creating a

new policy. This means all policy rules must be explicitly configured or explicitly disabled.

anchorectl system artifact-lifecycle-policy add --action=delete --artifact-type=image --name="example policy" --description=example --enabled=false --days-since-analyzed=30 --even-if-exists-in-runtime=true --include-base-images=false

✔ Added artifact-lifecycle-policy

Name: example lifecycle policy

Policy Conditions:

- artifactType: image

daysSinceAnalyzed: 30

evenIfExistsInRuntimeInventory: true

includeBaseImages: false

version: 1

Uuid: 999ad42e-e467-4251-b80c-6681b79ad4f6

Action: delete

Deleted At:

Enabled: false

Updated At: 2025-08-19T06:35:39Z

Created At: 2025-08-19T06:35:39Z

Description: example

Updating an existing Artifact Lifecycle Policy

anchorectl system artifact-lifecycle-policy update 5620b641-a25f-4b1f-966c-929281a41e16 --action=delete --name=example --artifact-type=image --days-since-analyzed=60 --even-if-exists-in-runtime=false --include-base-images=false

✔ Update artifact-lifecycle-policy

Name: example

Policy Conditions:

- artifactType: image

daysSinceAnalyzed: 60

evenIfExistsInRuntimeInventory: false

includeBaseImages: false

version: 2

Uuid: 5620b641-a25f-4b1f-966c-929281a41e16

Action: delete

Deleted At:

Enabled: false

Updated At: 2023-11-22T13:58:04Z

Created At: 2023-11-22T13:02:24Z

Description: test description

Enabling the Artifact Lifecycle Policy

anchorectl system artifact-lifecycle-policy update 5620b641-a25f-4b1f-966c-929281a41e16 --action=delete --name=example --artifact-type=image --days-since-analyzed=60 --even-if-exists-in-runtime=false --enabled=true --include-base-images=false

✔ Update artifact-lifecycle-policy

Name: example

Policy Conditions:

- artifactType: image

daysSinceAnalyzed: 60

evenIfExistsInRuntimeInventory: false

includeBaseImages: false

version: 2

Uuid: 5620b641-a25f-4b1f-966c-929281a41e16

Action: delete

Deleted At:

Enabled: true

Updated At: 2023-11-22T13:58:04Z

Created At: 2023-11-22T13:02:24Z

Description: test description

List Artifact Lifecycle Policies

anchorectl system artifact-lifecycle-policy list

✔ Fetched artifact-lifecycle-policies

Items:

- action: delete

createdAt: "2023-11-22T13:02:24Z"

description: example description

enabled: true

name: "example policy"

policyConditions:

- artifactType: image

daysSinceAnalyzed: 1

evenIfExistsInRuntimeInventory: true

includeBaseImages: false

version: 2

updatedAt: "2023-11-22T13:02:24Z"

uuid: 5620b641-a25f-4b1f-966c-929281a41e16

Get specific Artifact Lifecycle Policy

Note: it is possible to request “deleted” policies through this API for audit reasons. The deleted_at field will be null, and enabled will be true if the policy is active.

anchorectl system artifact-lifecycle-policy get 5620b641-a25f-4b1f-966c-929281a41e16

✔ Fetched artifact-lifecycle-policy

Name: 2023-11-22T13:02:24.621Z

Policy Conditions:

- artifactType: image

daysSinceAnalyzed: 1

evenIfExistsInRuntimeInventory: true

includeBaseImages: false

version: 1

Uuid: 5620b641-a25f-4b1f-966c-929281a41e16

Action: delete

Deleted At:

Enabled: true

Updated At: 2023-11-22T13:02:24Z

Created At: 2023-11-22T13:02:24Z

Description: test description

Delete a policy

Note: for the purposes of audit the policy will still remain in the system. It will be disabled and marked deleted. This will effectively make it hidden unless explicitly requested by its UUID through the API.

anchorectl system artifact-lifecycle-policy delete 73226831-9140-4d27-a922-4a61e43dbb0d

✔ Deleted artifact-lifecycle-policy

No results

6 - Data Synchronization

Introduction

In this section, you’ll learn how Anchore Enterprise ingests the data used for analysis and vulnerability management.

Your Anchore Enterprise deployment uses five datasets that are managed by the Anchore Data Service.

These datasets are automatically synced to your Anchore Enterprise deployment by the Data Syncer Service.

The datasets are:

- Vulnerability Database (vulnerability_db)

- Vulnerability Match Exclusions Database (vulnerability_match_exclusions_db)

- ClamAV Malware Database (clamav_db)

- STIG Profiles (stig_profile_db)

If your deployment is air-gapped, please review the Air-gapped Deployment

documentation for instructions on how to manually sync these datasets.

Please review the Anchore Data Service status page for

information on how to check the status of the datasets.

Requirements

Network Ingress

The following FQDN will need to be allowlisted in your network to allow the Data Syncer Service to communicate with the Anchore Data Service:

https://data.anchore-enterprise.com

Ideally the endpoint can be whitelisted via a layer 7/proxy.

If you are filtering based on IP, please raise a support ticket with Anchore so that we can provide you with the IP addresses.

6.1 - Data Syncer Configuration

Dataset Synchronization Interval

The Data Syncer Service will check every hour if there is new data available from the Anchore Data Service.

If it finds a new dataset then it will sync it down immediately.

It will also trigger the Policy Engine Service to reprocess the data to make it available for policy evaluations. The analyzer checks the

data syncer for a new ClamAV Malware signature database before every malware scan (if enabled).

Controlling Which Feeds and Groups are Synced

During initial data sync, you can always query the progress and status of the feed sync using anchorectl.

anchorectl feed list

✔ List feed

┌────────────────────────────────────────────┬────────────────────┬─────────┬──────────────────────┬──────────────┐

│ FEED │ GROUP │ ENABLED │ LAST UPDATED │ RECORD COUNT │

├────────────────────────────────────────────┼────────────────────┼─────────┼──────────────────────┼──────────────┤

│ ClamAV Malware Database │ clamav_db │ true │ 2024-09-26T13:13:50Z │ 1 │

│ Vulnerabilities │ github:composer │ true │ 2024-09-26T12:14:50Z │ 4036 │

│ Vulnerabilities │ github:dart │ true │ 2024-09-26T12:14:50Z │ 8 │

│ Vulnerabilities │ github:gem │ true │ 2024-09-26T12:14:50Z │ 817 │

│ Vulnerabilities │ github:go │ true │ 2024-09-26T12:14:50Z │ 1875 │

│ Vulnerabilities │ github:java │ true │ 2024-09-26T12:14:50Z │ 5058 │

│ Vulnerabilities │ github:npm │ true │ 2024-09-26T12:14:50Z │ 15586 │

│ Vulnerabilities │ github:nuget │ true │ 2024-09-26T12:14:50Z │ 624 │

│ Vulnerabilities │ github:python │ true │ 2024-09-26T12:14:50Z │ 3226 │

.

.

.

│ CISA KEV (Known Exploited Vulnerabilities) │ kev_db │ true │ 2024-09-26T13:13:47Z │ 1181 │

| Exploit Prediction Scoring System Database │ epss_db │ true │ 2024-11-18T18:04:12Z │ 266565 │

└────────────────────────────────────────────┴────────────────────┴─────────┴──────────────────────┴──────────────┘

Using the Config File to Include/Exclude Feeds and Package Types when scanning for vulnerabilities

With the feed service removed, Enterprise no longer supports excluding certain providers and package types from the vulnerability feed.

To ensure the same experience when using the product, you can now exclude certain providers and package types from matching vulnerabilities.

Using Helm

In your values.yaml file set the following:

policy_engine:

vulnerabilities:

matching:

exclude:

providers: ["rhel","debian"]

package_types: ["rpm"]

Using Docker Compose

In your config.yaml file set the following:

services:

policy_engine:

vulnerabilities:

matching:

exclude:

providers: ["rhel","debian"]

package_types: ["rpm"]

Further information can be found in Vulnerability Management.

6.2 - Data Synchronization

When Anchore Enterprise runs, the Data Syncer Service will begin to synchronize security feed data from the Anchore Data Service.

CVE data for Linux distributions such as Alpine, CentOS, Debian, Oracle, Red Hat and Ubuntu will be downloaded.

The initial sync typically take anywhere from 1-5 minutes depending on your environment and network speed. After that the Data Syncer Service will check every hour if there is new data available from the Anchore Data Service. If it finds a new dataset then it will sync it down immediately.

For air-gapped environments, please see the Air-Gapped documentation.

Checking Feed Status

Feed information can be retrieved through the API and AnchoreCTL.

anchorectl feed list

✔ List feed

┌────────────────────────────────────────────┬────────────────────┬─────────┬──────────────────────┬──────────────┐

│ FEED │ GROUP │ ENABLED │ LAST UPDATED │ RECORD COUNT │

├────────────────────────────────────────────┼────────────────────┼─────────┼──────────────────────┼──────────────┤

│ ClamAV Malware Database │ clamav_db │ true │ 2024-09-26T13:13:50Z │ 1 │

│ Vulnerabilities │ github:composer │ true │ 2024-09-26T12:14:50Z │ 4036 │

│ Vulnerabilities │ github:dart │ true │ 2024-09-26T12:14:50Z │ 8 │

│ Vulnerabilities │ github:gem │ true │ 2024-09-26T12:14:50Z │ 817 │

│ Vulnerabilities │ github:go │ true │ 2024-09-26T12:14:50Z │ 1875 │

│ Vulnerabilities │ github:java │ true │ 2024-09-26T12:14:50Z │ 5058 │

│ Vulnerabilities │ github:npm │ true │ 2024-09-26T12:14:50Z │ 15586 │

│ Vulnerabilities │ github:nuget │ true │ 2024-09-26T12:14:50Z │ 624 │

│ Vulnerabilities │ github:python │ true │ 2024-09-26T12:14:50Z │ 3226 │

│ Vulnerabilities │ github:rust │ true │ 2024-09-26T12:14:50Z │ 804 │

│ Vulnerabilities │ github:swift │ true │ 2024-09-26T12:14:50Z │ 32 │

│ Vulnerabilities │ msrc:10378 │ true │ 2024-09-26T12:14:49Z │ 2668 │

│ Vulnerabilities │ msrc:10379 │ true │ 2024-09-26T12:14:49Z │ 2645 │

│ Vulnerabilities │ msrc:10481 │ true │ 2024-09-26T12:14:49Z │ 1951 │

│ Vulnerabilities │ msrc:10482 │ true │ 2024-09-26T12:14:49Z │ 2028 │

│ Vulnerabilities │ msrc:10483 │ true │ 2024-09-26T12:14:49Z │ 2822 │

│ Vulnerabilities │ msrc:10484 │ true │ 2024-09-26T12:14:49Z │ 1934 │

│ Vulnerabilities │ msrc:10543 │ true │ 2024-09-26T12:14:49Z │ 2796 │

│ Vulnerabilities │ msrc:10729 │ true │ 2024-09-26T12:14:49Z │ 2908 │

│ Vulnerabilities │ msrc:10735 │ true │ 2024-09-26T12:14:49Z │ 3006 │

│ Vulnerabilities │ msrc:10788 │ true │ 2024-09-26T12:14:49Z │ 466 │

│ Vulnerabilities │ msrc:10789 │ true │ 2024-09-26T12:14:49Z │ 437 │

│ Vulnerabilities │ msrc:10816 │ true │ 2024-09-26T12:14:49Z │ 3328 │

│ Vulnerabilities │ msrc:10852 │ true │ 2024-09-26T12:14:49Z │ 3043 │

│ Vulnerabilities │ msrc:10853 │ true │ 2024-09-26T12:14:49Z │ 3167 │

│ Vulnerabilities │ msrc:10855 │ true │ 2024-09-26T12:14:49Z │ 3300 │

│ Vulnerabilities │ msrc:10951 │ true │ 2024-09-26T12:14:49Z │ 716 │

│ Vulnerabilities │ msrc:10952 │ true │ 2024-09-26T12:14:49Z │ 766 │

│ Vulnerabilities │ msrc:11453 │ true │ 2024-09-26T12:14:49Z │ 1240 │

│ Vulnerabilities │ msrc:11454 │ true │ 2024-09-26T12:14:49Z │ 1290 │

│ Vulnerabilities │ msrc:11466 │ true │ 2024-09-26T12:14:49Z │ 395 │

│ Vulnerabilities │ msrc:11497 │ true │ 2024-09-26T12:14:49Z │ 1454 │

│ Vulnerabilities │ msrc:11498 │ true │ 2024-09-26T12:14:49Z │ 1514 │

│ Vulnerabilities │ msrc:11499 │ true │ 2024-09-26T12:14:49Z │ 981 │

│ Vulnerabilities │ msrc:11563 │ true │ 2024-09-26T12:14:49Z │ 1344 │

│ Vulnerabilities │ msrc:11568 │ true │ 2024-09-26T12:14:49Z │ 2993 │

│ Vulnerabilities │ msrc:11569 │ true │ 2024-09-26T12:14:49Z │ 3095 │

│ Vulnerabilities │ msrc:11570 │ true │ 2024-09-26T12:14:49Z │ 2975 │

│ Vulnerabilities │ msrc:11571 │ true │ 2024-09-26T12:14:49Z │ 3266 │

│ Vulnerabilities │ msrc:11572 │ true │ 2024-09-26T12:14:49Z │ 3238 │

│ Vulnerabilities │ msrc:11583 │ true │ 2024-09-26T12:14:49Z │ 1038 │

│ Vulnerabilities │ msrc:11644 │ true │ 2024-09-26T12:14:49Z │ 1054 │

│ Vulnerabilities │ msrc:11645 │ true │ 2024-09-26T12:14:49Z │ 1089 │

│ Vulnerabilities │ msrc:11646 │ true │ 2024-09-26T12:14:49Z │ 1055 │

│ Vulnerabilities │ msrc:11647 │ true │ 2024-09-26T12:14:49Z │ 1074 │

│ Vulnerabilities │ msrc:11712 │ true │ 2024-09-26T12:14:49Z │ 1442 │

│ Vulnerabilities │ msrc:11713 │ true │ 2024-09-26T12:14:49Z │ 1491 │

│ Vulnerabilities │ msrc:11714 │ true │ 2024-09-26T12:14:49Z │ 1447 │

│ Vulnerabilities │ msrc:11715 │ true │ 2024-09-26T12:14:49Z │ 999 │

│ Vulnerabilities │ msrc:11766 │ true │ 2024-09-26T12:14:49Z │ 912 │

│ Vulnerabilities │ msrc:11767 │ true │ 2024-09-26T12:14:49Z │ 915 │

│ Vulnerabilities │ msrc:11768 │ true │ 2024-09-26T12:14:49Z │ 940 │

│ Vulnerabilities │ msrc:11769 │ true │ 2024-09-26T12:14:49Z │ 934 │

│ Vulnerabilities │ msrc:11800 │ true │ 2024-09-26T12:14:49Z │ 382 │

│ Vulnerabilities │ msrc:11801 │ true │ 2024-09-26T12:14:49Z │ 1277 │

│ Vulnerabilities │ msrc:11802 │ true │ 2024-09-26T12:14:49Z │ 1277 │

│ Vulnerabilities │ msrc:11803 │ true │ 2024-09-26T12:14:49Z │ 981 │

│ Vulnerabilities │ msrc:11896 │ true │ 2024-09-26T12:14:49Z │ 792 │

│ Vulnerabilities │ msrc:11897 │ true │ 2024-09-26T12:14:49Z │ 762 │

│ Vulnerabilities │ msrc:11898 │ true │ 2024-09-26T12:14:49Z │ 763 │

│ Vulnerabilities │ msrc:11923 │ true │ 2024-09-26T12:14:49Z │ 1733 │

│ Vulnerabilities │ msrc:11924 │ true │ 2024-09-26T12:14:49Z │ 1726 │

│ Vulnerabilities │ msrc:11926 │ true │ 2024-09-26T12:14:49Z │ 1536 │

│ Vulnerabilities │ msrc:11927 │ true │ 2024-09-26T12:14:49Z │ 1503 │

│ Vulnerabilities │ msrc:11929 │ true │ 2024-09-26T12:14:49Z │ 1433 │

│ Vulnerabilities │ msrc:11930 │ true │ 2024-09-26T12:14:49Z │ 1429 │

│ Vulnerabilities │ msrc:11931 │ true │ 2024-09-26T12:14:49Z │ 1474 │

│ Vulnerabilities │ msrc:12085 │ true │ 2024-09-26T12:14:49Z │ 1044 │

│ Vulnerabilities │ msrc:12086 │ true │ 2024-09-26T12:14:49Z │ 1053 │

│ Vulnerabilities │ msrc:12097 │ true │ 2024-09-26T12:14:49Z │ 964 │

│ Vulnerabilities │ msrc:12098 │ true │ 2024-09-26T12:14:49Z │ 939 │

│ Vulnerabilities │ msrc:12099 │ true │ 2024-09-26T12:14:49Z │ 943 │

│ Vulnerabilities │ nvd │ true │ 2024-09-26T12:14:58Z │ 263831 │

│ Vulnerabilities │ alpine:3.10 │ true │ 2024-09-26T12:13:37Z │ 2321 │

│ Vulnerabilities │ alpine:3.11 │ true │ 2024-09-26T12:13:37Z │ 2659 │

│ Vulnerabilities │ alpine:3.12 │ true │ 2024-09-26T12:13:37Z │ 3193 │

│ Vulnerabilities │ alpine:3.13 │ true │ 2024-09-26T12:13:37Z │ 3684 │

│ Vulnerabilities │ alpine:3.14 │ true │ 2024-09-26T12:13:37Z │ 4265 │

│ Vulnerabilities │ alpine:3.15 │ true │ 2024-09-26T12:13:37Z │ 4815 │

│ Vulnerabilities │ alpine:3.16 │ true │ 2024-09-26T12:13:37Z │ 5271 │

│ Vulnerabilities │ alpine:3.17 │ true │ 2024-09-26T12:13:37Z │ 5630 │

│ Vulnerabilities │ alpine:3.18 │ true │ 2024-09-26T12:13:37Z │ 6144 │

│ Vulnerabilities │ alpine:3.19 │ true │ 2024-09-26T12:13:37Z │ 6338 │

│ Vulnerabilities │ alpine:3.2 │ true │ 2024-09-26T12:13:37Z │ 305 │

│ Vulnerabilities │ alpine:3.20 │ true │ 2024-09-26T12:13:37Z │ 6428 │

│ Vulnerabilities │ alpine:3.3 │ true │ 2024-09-26T12:13:37Z │ 470 │

│ Vulnerabilities │ alpine:3.4 │ true │ 2024-09-26T12:13:37Z │ 679 │

│ Vulnerabilities │ alpine:3.5 │ true │ 2024-09-26T12:13:37Z │ 902 │

│ Vulnerabilities │ alpine:3.6 │ true │ 2024-09-26T12:13:37Z │ 1075 │

│ Vulnerabilities │ alpine:3.7 │ true │ 2024-09-26T12:13:37Z │ 1461 │

│ Vulnerabilities │ alpine:3.8 │ true │ 2024-09-26T12:13:37Z │ 1671 │

│ Vulnerabilities │ alpine:3.9 │ true │ 2024-09-26T12:13:37Z │ 1955 │

│ Vulnerabilities │ alpine:edge │ true │ 2024-09-26T12:13:37Z │ 6466 │

│ Vulnerabilities │ amzn:2 │ true │ 2024-09-26T12:13:34Z │ 2280 │

│ Vulnerabilities │ amzn:2022 │ true │ 2024-09-26T12:13:34Z │ 276 │

│ Vulnerabilities │ amzn:2023 │ true │ 2024-09-26T12:13:34Z │ 736 │

│ Vulnerabilities │ chainguard:rolling │ true │ 2024-09-26T12:13:19Z │ 4462 │

│ Vulnerabilities │ debian:10 │ true │ 2024-09-26T12:14:52Z │ 32021 │

│ Vulnerabilities │ debian:11 │ true │ 2024-09-26T12:14:52Z │ 33497 │

│ Vulnerabilities │ debian:12 │ true │ 2024-09-26T12:14:52Z │ 32452 │

│ Vulnerabilities │ debian:13 │ true │ 2024-09-26T12:14:52Z │ 31631 │

│ Vulnerabilities │ debian:7 │ true │ 2024-09-26T12:14:52Z │ 20455 │

│ Vulnerabilities │ debian:8 │ true │ 2024-09-26T12:14:52Z │ 24058 │

│ Vulnerabilities │ debian:9 │ true │ 2024-09-26T12:14:52Z │ 28240 │

│ Vulnerabilities │ debian:unstable │ true │ 2024-09-26T12:14:52Z │ 35913 │

│ Vulnerabilities │ mariner:1.0 │ true │ 2024-09-26T12:14:41Z │ 2092 │

│ Vulnerabilities │ mariner:2.0 │ true │ 2024-09-26T12:14:41Z │ 2624 │

│ Vulnerabilities │ ol:5 │ true │ 2024-09-26T12:14:44Z │ 1255 │

│ Vulnerabilities │ ol:6 │ true │ 2024-09-26T12:14:44Z │ 1709 │

│ Vulnerabilities │ ol:7 │ true │ 2024-09-26T12:14:44Z │ 2196 │

│ Vulnerabilities │ ol:8 │ true │ 2024-09-26T12:14:44Z │ 1906 │

│ Vulnerabilities │ ol:9 │ true │ 2024-09-26T12:14:44Z │ 870 │

│ Vulnerabilities │ rhel:5 │ true │ 2024-09-26T12:14:59Z │ 7193 │

│ Vulnerabilities │ rhel:6 │ true │ 2024-09-26T12:14:59Z │ 11121 │

│ Vulnerabilities │ rhel:7 │ true │ 2024-09-26T12:14:59Z │ 11359 │

│ Vulnerabilities │ rhel:8 │ true │ 2024-09-26T12:14:59Z │ 6998 │

│ Vulnerabilities │ rhel:9 │ true │ 2024-09-26T12:14:59Z │ 4039 │

│ Vulnerabilities │ sles:11 │ true │ 2024-09-26T12:14:47Z │ 594 │

│ Vulnerabilities │ sles:11.1 │ true │ 2024-09-26T12:14:47Z │ 6125 │

│ Vulnerabilities │ sles:11.2 │ true │ 2024-09-26T12:14:47Z │ 3291 │

│ Vulnerabilities │ sles:11.3 │ true │ 2024-09-26T12:14:47Z │ 7081 │

│ Vulnerabilities │ sles:11.4 │ true │ 2024-09-26T12:14:47Z │ 6583 │

│ Vulnerabilities │ sles:12 │ true │ 2024-09-26T12:14:47Z │ 6018 │

│ Vulnerabilities │ sles:12.1 │ true │ 2024-09-26T12:14:47Z │ 6205 │

│ Vulnerabilities │ sles:12.2 │ true │ 2024-09-26T12:14:47Z │ 8339 │

│ Vulnerabilities │ sles:12.3 │ true │ 2024-09-26T12:14:47Z │ 10396 │

│ Vulnerabilities │ sles:12.4 │ true │ 2024-09-26T12:14:47Z │ 10215 │

│ Vulnerabilities │ sles:12.5 │ true │ 2024-09-26T12:14:47Z │ 12444 │

│ Vulnerabilities │ sles:15 │ true │ 2024-09-26T12:14:47Z │ 8737 │

│ Vulnerabilities │ sles:15.1 │ true │ 2024-09-26T12:14:47Z │ 9245 │

│ Vulnerabilities │ sles:15.2 │ true │ 2024-09-26T12:14:47Z │ 9572 │

│ Vulnerabilities │ sles:15.3 │ true │ 2024-09-26T12:14:47Z │ 10074 │

│ Vulnerabilities │ sles:15.4 │ true │ 2024-09-26T12:14:47Z │ 10436 │

│ Vulnerabilities │ sles:15.5 │ true │ 2024-09-26T12:14:47Z │ 10880 │

│ Vulnerabilities │ sles:15.6 │ true │ 2024-09-26T12:14:47Z │ 3775 │

│ Vulnerabilities │ ubuntu:12.04 │ true │ 2024-09-26T12:15:12Z │ 14934 │

│ Vulnerabilities │ ubuntu:12.10 │ true │ 2024-09-26T12:15:12Z │ 5641 │

│ Vulnerabilities │ ubuntu:13.04 │ true │ 2024-09-26T12:15:12Z │ 4117 │

│ Vulnerabilities │ ubuntu:14.04 │ true │ 2024-09-26T12:15:12Z │ 37910 │

│ Vulnerabilities │ ubuntu:14.10 │ true │ 2024-09-26T12:15:12Z │ 4437 │

│ Vulnerabilities │ ubuntu:15.04 │ true │ 2024-09-26T12:15:12Z │ 6220 │

│ Vulnerabilities │ ubuntu:15.10 │ true │ 2024-09-26T12:15:12Z │ 6489 │

│ Vulnerabilities │ ubuntu:16.04 │ true │ 2024-09-26T12:15:12Z │ 35057 │

│ Vulnerabilities │ ubuntu:16.10 │ true │ 2024-09-26T12:15:12Z │ 8607 │

│ Vulnerabilities │ ubuntu:17.04 │ true │ 2024-09-26T12:15:12Z │ 9094 │

│ Vulnerabilities │ ubuntu:17.10 │ true │ 2024-09-26T12:15:12Z │ 7900 │

│ Vulnerabilities │ ubuntu:18.04 │ true │ 2024-09-26T12:15:12Z │ 29533 │

│ Vulnerabilities │ ubuntu:18.10 │ true │ 2024-09-26T12:15:12Z │ 8367 │

│ Vulnerabilities │ ubuntu:19.04 │ true │ 2024-09-26T12:15:12Z │ 8634 │

│ Vulnerabilities │ ubuntu:19.10 │ true │ 2024-09-26T12:15:12Z │ 8414 │

│ Vulnerabilities │ ubuntu:20.04 │ true │ 2024-09-26T12:15:12Z │ 25271 │

│ Vulnerabilities │ ubuntu:20.10 │ true │ 2024-09-26T12:15:12Z │ 9974 │

│ Vulnerabilities │ ubuntu:21.04 │ true │ 2024-09-26T12:15:12Z │ 11304 │

│ Vulnerabilities │ ubuntu:21.10 │ true │ 2024-09-26T12:15:12Z │ 12628 │